| Stack | Role | Year | Status |
|---|---|---|---|
| React, Node.js, Express, MongoDB, WebSockets, Three.js, React Three Fiber, JWT, Recharts, Framer Motion | Full-Stack Software Engineer | 2026 | Completed |

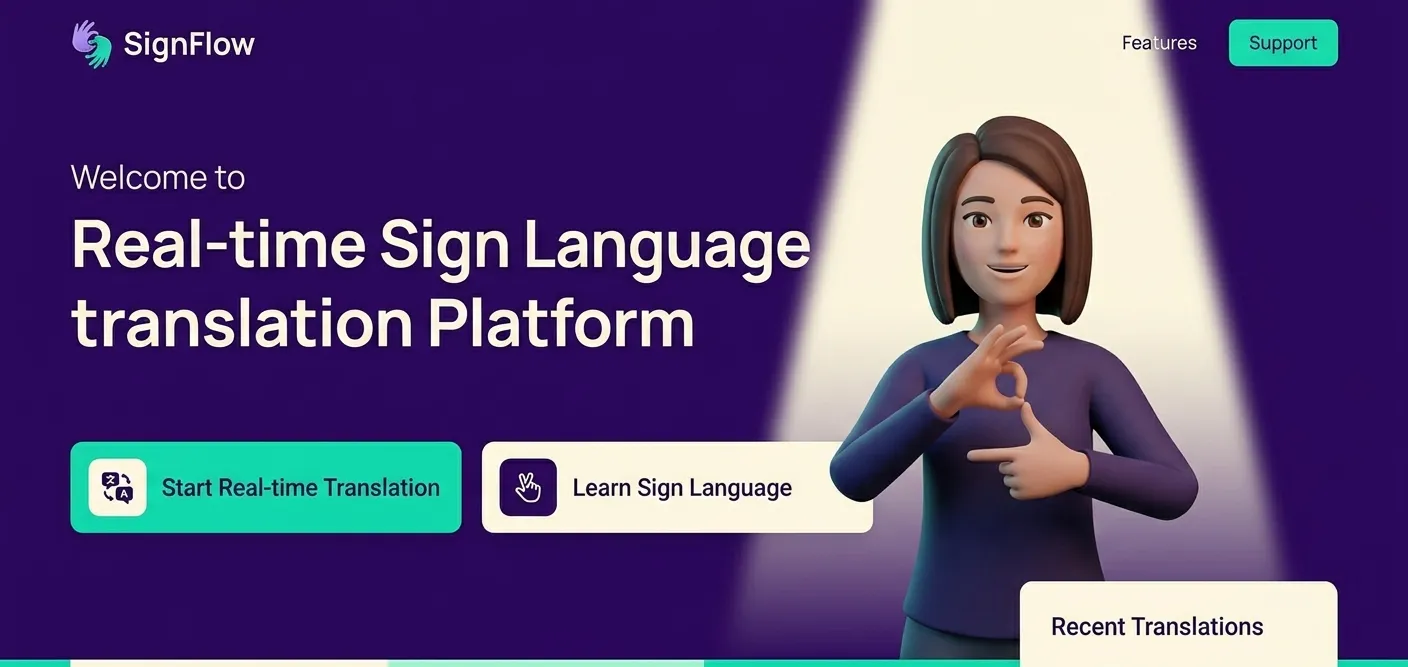

SignFlow - Real-Time Assistive Tech Simulation for Sign Language

Building a high-fidelity assistive technology simulation for real-time sign language translation

SignFlow is a high-fidelity assistive technology platform for real-time sign language translation, built to explore the architectural requirements of scaling accessibility platforms. It combines WebSocket-powered streaming, a 3D avatar whose signing varies naturally between repetitions, and an accessibility-first interface designed for WCAG compliance, demonstrating production-grade architecture for specialized user bases.

The Problem

Current text-to-sign demonstrations tend to feel robotic. The same animation plays for every occurrence of a word, with no variation; timing, facial expression, and prosody are missing; real-time feedback loops are poor and latency is noticeable; and accessibility of the interface itself gets negligible consideration. For assistive technology to be trusted by the Deaf and Hard-of-Hearing community, naturalness and responsiveness are as critical as linguistic correctness.

To move from robotic loops to a responsive, natural-feeling interpreter, I built SignFlow so translation streams in real time and the 3D avatar varies per phrase, with failures in rendering or network isolated from the core experience.

The Solution

I architected SignFlow around four pieces: dynamic logic that builds per-word and phrase-based animation sequences, WebSocket-powered live streaming of translations for near-zero latency, a responsive interface with a high-fidelity 3D avatar that reacts to user input, and an accessibility-first UI built specifically for inclusive user experiences. By applying subtle variations to repeated inputs, SignFlow creates the illusion of a living interpreter rather than a looped animation.

Key Technical Terms

- Animation pools (seed-based variation): SignFlow stores multiple animation variants per word or phrase and picks one by seed so repeated words do not look identical; that supports the project goal of natural, human-like signing instead of a single loop.

- WebSocket streaming: Text is sent over a WebSocket and sign sequences stream back so latency stays low; for this assistive product that keeps the experience responsive and real-time without polling.

- 3D rendering isolation: Three.js/React Three Fiber runs in a layer separate from translation and API so a render glitch or heavy frame does not block the translation pipeline or crash the app.
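The seed-based selection described above could be sketched as follows. This is a minimal illustration, not SignFlow's actual implementation: the `AnimationVariant` shape, the `pickVariant` helper, the FNV-1a style hash, and the rule of rotating by occurrence count are all assumptions introduced here.

```typescript
// Hypothetical data model: the source does not describe the real schema.
interface AnimationVariant {
  id: string;
  durationMs: number;
}

type AnimationPool = Record<string, AnimationVariant[]>;

// FNV-1a style 32-bit hash over UTF-16 code units (an assumed choice of hash).
function hashSeed(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0; // force an unsigned 32-bit result
}

// Picks a variant deterministically from the seed, then rotates through the
// pool on each repeat so consecutive occurrences of a word differ.
function pickVariant(
  pool: AnimationPool,
  word: string,
  seed: string,
  occurrence: number
): AnimationVariant | undefined {
  const key = word.toLowerCase();
  if (!Object.prototype.hasOwnProperty.call(pool, key)) return undefined;
  const variants = pool[key];
  if (!variants || variants.length === 0) return undefined;
  const base = hashSeed(`${seed}:${key}`);
  const step = Math.max(0, Math.floor(occurrence));
  return variants[(base + step) % variants.length];
}
```

Rotating by occurrence count means consecutive repeats of a word never reuse the same variant when the pool holds at least two, while the same seed always reproduces the same sequence.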

The Impact

SignFlow is a fully implemented assistive technology prototype that demonstrates real-time system design using WebSockets, scalable cloud architecture with MongoDB Atlas, intentional UX for specialized user bases, and product-level thinking that bridges coding projects and viable market solutions. The platform features a high-fidelity 3D avatar simulation, natural variation in animations, an analytics dashboard with interactive charts, and WCAG accessibility compliance with full ARIA support and keyboard navigation.

Outcomes

- Fully functional assistive technology prototype with production-grade architecture

- Real-time translation system with WebSocket-powered streaming

- High-fidelity 3D avatar with natural variation and micro-expressions

- Comprehensive analytics dashboard with interactive charts and user insights

- Full accessibility compliance with WCAG standards

- Scalable cloud architecture with MongoDB Atlas

Architecture Deep-Dive

SignFlow follows a real-time streaming architecture: React frontend with Three.js (React Three Fiber) for 3D avatar rendering, Node.js/Express backend for translation processing, MongoDB Atlas for cloud-hosted data with indexed collections, and WebSocket connections for near-zero latency streaming. Animation pools store multiple variations per word/phrase, with seed-based selection for natural variation. The architecture separates 3D rendering from translation logic, ensuring smooth performance. Analytics are computed on-demand using MongoDB aggregation pipelines.
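One way to picture the streaming step is a server-side function that emits one message per word rather than a single response. The sketch below is an illustration under assumptions: the `ServerMessage` shape, the `streamTranslation` name, and the `lookup` parameter are hypothetical, and the `send` callback stands in for a WebSocket connection's `send` method.

```typescript
// Hypothetical wire format: the source does not document the real protocol.
type ServerMessage =
  | { type: "sign"; index: number; word: string; animationId: string }
  | { type: "done"; total: number };

// Splits the input into words and sends one JSON message per word, followed
// by a final "done" marker so the client knows the sequence is complete.
// Returns the number of words that were streamed.
function streamTranslation(
  text: string,
  lookup: (word: string) => string,
  send: (payload: string) => void
): number {
  const words = text
    .trim()
    .split(/\s+/)
    .filter((w) => w.length > 0);

  words.forEach((word, index) => {
    const message: ServerMessage = {
      type: "sign",
      index,
      word,
      animationId: lookup(word),
    };
    send(JSON.stringify(message));
  });

  const done: ServerMessage = { type: "done", total: words.length };
  send(JSON.stringify(done));
  return words.length;
}
```

Because each word goes out as its own message, the client can start animating the first sign before the rest of the sentence has been processed, which is the property that polling a REST endpoint would not give.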

Key Engineering Decisions

I chose WebSocket streaming over REST polling because near-zero latency was essential to a responsive text-to-sign feel, trading some complexity for real-time responsiveness. I selected Three.js over 2D animations because the immersive 3D avatar better served accessibility and user trust. I implemented animation pools over single animations so signing had natural variation and felt less robotic. I used MongoDB Atlas over a self-hosted database for cloud scalability and operational simplicity. I deferred voice input and video recording to ship the core translation experience first.

Failure Modes & Resilience

- WebSocket disconnect: the client reconnects and can resend input so the user is not stuck.

- 3D render or GPU failure: error boundaries contain the avatar component, so the rest of the UI (text, controls) still works and we can show a fallback or retry.

- Translation service or DB slow: we show loading and timeouts so the user knows the system is working; we do not block the main thread.

- Missing animation for a word: we fall back to spelling or a default so the experience degrades gracefully instead of breaking.
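Two of these failure modes lend themselves to a short sketch: the missing-animation fallback and the reconnect timing. Everything named below is hypothetical (`SignStep`, `resolveSteps`, `DEFAULT_ANIMATION_ID`, `reconnectDelayMs`), and the capped exponential backoff is an assumed policy; the source only says that the client reconnects.

```typescript
// Hypothetical step type: either play a stored animation or fingerspell a letter.
type SignStep =
  | { kind: "animation"; id: string }
  | { kind: "fingerspell"; letter: string };

// Assumed identifier for a neutral placeholder animation.
const DEFAULT_ANIMATION_ID = "default-idle";

// Resolves a word to playable steps. Known words map to their animation;
// unknown words are spelled letter by letter; input with no letters at all
// falls back to the default animation.
function resolveSteps(word: string, known: Record<string, string>): SignStep[] {
  const key = word.toLowerCase();
  if (Object.prototype.hasOwnProperty.call(known, key)) {
    return [{ kind: "animation", id: known[key] }];
  }
  const letters = key.split("").filter((ch) => /[a-z]/.test(ch));
  if (letters.length === 0) {
    return [{ kind: "animation", id: DEFAULT_ANIMATION_ID }];
  }
  return letters.map((letter): SignStep => ({ kind: "fingerspell", letter }));
}

// Capped exponential backoff for reconnect attempts (attempt starts at 0).
function reconnectDelayMs(attempt: number, baseMs = 500, maxMs = 10000): number {
  const safeAttempt = Math.max(0, Math.floor(attempt));
  return Math.min(maxMs, baseMs * Math.pow(2, safeAttempt));
}
```

With these defaults the delays run 500 ms, 1000 ms, 2000 ms and so on up to a 10 second ceiling, which keeps a flapping connection from hammering the server while still recovering quickly from a brief drop.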

Outcome & Future Potential

Roadmap & Expansion

The vision includes scaling to 10,000+ concurrent users through WebSocket load balancing, Redis for session management, and CDN integration for 3D assets. Containerization with Docker is planned for consistent deployments. Advanced features include AI-powered sign recognition, multiple avatars with personalization, sign language learning modules, and community sharing capabilities. Enterprise features include API access for third-party integrations, white-label customization, and comprehensive analytics dashboards.

- Near-zero latency: WebSocket-powered streaming

- Multiple variants: Per-word animation pools with natural variation

- WCAG Compliant: Full ARIA support and keyboard navigation

- MongoDB Atlas: Cloud-hosted with indexed collections
